What is Ground Truth Data?

Ground truth data is data collected at scale from real-world scenarios to train algorithms on contextual information such as verbal speech, natural language text, human gestures and behaviors, and spatial orientation. The term “ground truth” comes from the geological and earth sciences, where it describes validating data by going out into the field and checking “on the ground.” It has since been adopted in other fields to denote data that is known to be correct.

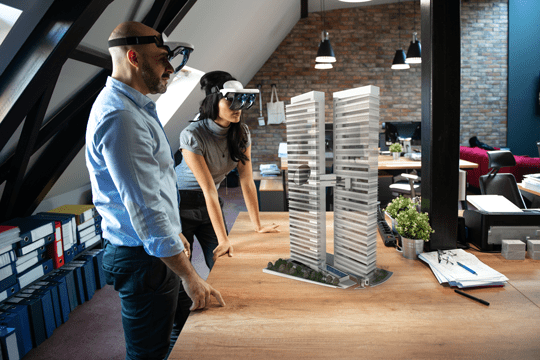

The human-to-machine interface is moving from mice and keyboards to touchscreens, gestures, facial recognition, voice commands, and beyond, transforming the way we engage with machines, data, and each other. This revolution is driven by artificial intelligence (AI) and machine learning (ML) algorithms that rely on accurate ground truth data to recognize the real world effectively.

With the advent of AI and ML, ground truth data has taken on a critical role in technology and is expected to become a lucrative market opportunity. QYR Research reported that the global artificial intelligence market was valued at $349 million in 2018 and is expected to grow at a compound annual growth rate of 51.1 percent from 2019 to 2025. The implications for businesses and consumers are profound.

Products that rely on AI and ML technology include smart home technology, virtual and augmented reality headsets, and self-driving cars, to name just a few. Numerous industries are discovering the benefits as well, including financial services, retail, creative arts, energy, healthcare, and manufacturing. And adoption of AI and ML technology is still in its early stages.

Why is Ground Truth Data Collection Important?

Ground truth data collection is crucial for companies that develop AI and ML products that ultimately interact with people. The performance of such products depends on the quality of the data, which drives the effectiveness of the underlying AI and ML algorithms. If data is not collected and subsequently managed correctly, the algorithms will fail, and the user experience will suffer.

Four Tips for Successful Ground Truth Data Collection and Management

- How much to do and how much is enough: While it is impractical to scan all seven billion people in the world, you do need enough data to ensure that the algorithms work correctly. When too little data has been collected for facial recognition, for example, a device such as a cell phone will not accurately distinguish between different faces.

- Data capture is not easy: A common assumption is that ground truth data capture is easy, and as a result, too little time is spent on planning. In fact, the most critical step is to fully understand what kind of data is needed, and all the associated parameters, before executing. If a data collection project needs 2,000 participants, determining how and where to find those people requires planning and research. If 10,000 homes need to be scanned, how do you find them, and in which geographic areas? It would be easy to limit the project to one area, but home designs vary, so you should consider a cross-section that includes, for example, Dallas, New York, Chicago, Atlanta, and San Francisco, or international locations if the product has an international focus. Failing to work through this process methodically only leads to extra cycles, time, and money spent getting the right data.

- There is no standard: Another misconception is that there is, or should be, a standard for data capture. But each project is unique, based on the product and the scenarios needed to optimize it. The execution can be standardized, but only after the data capture process has been carefully planned and designed.

- One collection is not enough: Some assume that data already collected can be reused for other, future scenarios simply by changing the algorithms. Inevitably, however, the first data set will not fill every gap, depending on the scenarios it was designed to cover. For example, if you captured voice recognition data only on the West Coast, the product may perform poorly in the Midwest, the South, or internationally, where typical accents are noticeably different.
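To make the geographic cross-section from the home-scanning example concrete, the sketch below splits a target sample across regions in proportion to chosen weights. The city list comes from the example above; the equal weights and the helper name `allocate_quotas` are hypothetical, and largest-remainder rounding is simply one common way (an assumption here, not a prescribed method) to keep the per-region quotas summing exactly to the target.

```python
from math import floor


def allocate_quotas(total: int, weights: dict[str, float]) -> dict[str, int]:
    """Split `total` captures across regions in proportion to `weights`.

    Hypothetical helper: uses largest-remainder rounding so the quotas
    always add up to exactly `total`.
    """
    weight_sum = sum(weights.values())
    if total < 0 or weight_sum <= 0:
        raise ValueError("total must be non-negative and weights must sum to > 0")
    # Exact (fractional) share of the total for each region.
    exact = {region: total * w / weight_sum for region, w in weights.items()}
    # Start every region at the floor of its share.
    quotas = {region: floor(share) for region, share in exact.items()}
    leftover = total - sum(quotas.values())
    # Give the remaining units to the regions with the largest fractional parts.
    by_remainder = sorted(exact, key=lambda r: exact[r] - quotas[r], reverse=True)
    for region in by_remainder[:leftover]:
        quotas[region] += 1
    return quotas


# Hypothetical equal weighting across the five cities named above.
cities = {"Dallas": 1, "New York": 1, "Chicago": 1, "Atlanta": 1, "San Francisco": 1}
print(allocate_quotas(10_000, cities))
```

With equal weights, each city receives 2,000 homes; unequal weights (for example, weighting by the variety of local home designs) would shift the quotas while still totaling 10,000.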

The Q Analysts Approach

Managing ground truth data collection presents several challenges. We address them through the following:

- Partnership: When beginning a new project, it’s important that we make a full evaluation at the outset, and that means asking the right questions. Identifying the right number of participants is a first step, but asking follow-up questions about skin color or race, eye color, geography, environmental factors, and so forth leads to a thorough understanding of our clients’ requirements. At that point, we can guide them in determining the optimal parameters for capturing the highest-quality, best-fit data and ensure that the budget is scoped appropriately.

- Experience: Q Analysts has significant expertise in initiating, creating, and delivering a variety of data collection initiatives in the areas of verbal speech, human gestures, and inanimate objects and spaces. The knowledge we have gained from designing and executing more than 100,000 data capture scenarios creates efficiencies that save our clients money in the long run. This knowledge, combined with our best practices for running a successful data collection program, gives clients assurance that we deliver the highest-quality data, with no additional verification required.

- Design and Refine: With extensive experience in speech, human, and space data collection, we are well equipped to design the right program from the ground up, customized to each client, because one size does not fit all. This experience also allows us to think creatively and refine the process. Before launching any project, we conduct a pilot to confirm that it is on track to achieve the best results. In one case, a client wanted a device tested for speech recognition at different angles. Instead of running discrete tests with one device, we suggested investing in four devices to run multiple tests simultaneously, which saved labor and time and delivered more accurate results in a single measurement session.

- Logistics and Execution: The logistics of data collection can be very complex (see Natural Speech Data Collection case study), requiring, for example, knowledge of the target demographics and where to find them. Once the required demographic is identified, how do you reach those individuals and incentivize them to participate? This is just the tip of the logistics iceberg. Our many years of execution experience, along with a network of resources, allow us to do the heavy lifting on our clients’ behalf.

- Data Privacy & Security: This is one of the biggest areas of risk for firms consuming data from human participants. Several recent news articles have exposed practices that have lawmakers around the world taking note of the privacy and security risks associated with human data collection. Mitigating risk and exposure in this area takes experience and an understanding of best practices for handling sensitive data. Backed by our ISO 27001 information security certification, our approach to each new project accommodates that project’s specific needs while meeting the strict security policies outlined below.

- Project devices and equipment that contain sensitive and confidential data must always be secured, and capture and storage devices must be securely stored away when not in use.

- Participants in any data collection program or project should be required to sign an NDA before participating. They should know that the data they provide will be used in an AI application, and they should be assured that it will be handled securely and de-identified at some stage, if not at the point of collection.

- When in use, capture and storage devices must be supervised and monitored at all times during daily operation.

- Participants are not allowed to view the devices’ back-end systems, to ensure complete data privacy. Guidelines restricting access to collected data should also be in place.

- Data should be shared only with parties who have permission to access it, and it should be accessed only when necessary to perform job functions.

- Any movement or manual transfer of data (e.g., physically moving drives to other locations or handing off forms) must first be logged in the appropriate tracking form and managed by authorized personnel. Any transfer of data over the internet should be performed only on a secured network and overseen by authorized personnel.

- Data security policies must be held to a strict standard and always followed. A breach of data security processes could result in employee termination or even a complete project shutdown.
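As one illustration of the de-identification requirement in the participant policy above, the sketch below drops direct identifiers from a record and replaces them with a keyed pseudonym computed with HMAC-SHA256 from Python’s standard library. The field names, the `pseudonymize` and `deidentify` helpers, and the choice of HMAC are assumptions for illustration, not a description of any specific production tooling. In practice the key would be held in a secured key store, separate from the data, and accessible only to authorized personnel.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, key: bytes) -> str:
    """Return a stable, keyed pseudonym for a participant identifier.

    The same identifier and key always give the same pseudonym, so records
    from one participant can still be linked, but without the key the
    pseudonym cannot practically be matched back to the original value.
    """
    normalized = identifier.strip().lower().encode("utf-8")
    return hmac.new(key, normalized, hashlib.sha256).hexdigest()


def deidentify(record: dict, key: bytes, id_field: str = "email",
               direct_fields: tuple = ("name", "email", "phone")) -> dict:
    """Hypothetical helper: drop direct identifiers, add a pseudonymous ID."""
    cleaned = {k: v for k, v in record.items() if k not in direct_fields}
    cleaned["participant_id"] = pseudonymize(record[id_field], key)
    return cleaned


# Hypothetical record and key; a real key would come from a secure store.
key = b"example-secret-key"
raw = {"name": "Jane Doe", "email": "Jane@Example.com",
       "accent_region": "Midwest", "audio_file": "clip_0001.wav"}
print(deidentify(raw, key))
```

Keying the hash, rather than using a plain hash, matters here: a plain SHA-256 of an email address can be reversed by anyone who hashes a list of candidate addresses, whereas the keyed version requires access to the secret key.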

Conclusion

The world will become increasingly interactive as machines grow ever more capable of making decisions and suggestions. Self-driving cars, home automation, computer interaction, intelligent cameras, and many more examples of AI and ML algorithms at work illustrate this paradigm shift. Ground truth data is the foundation on which these products are built.

We hold our data security policies to the strict ISO 27001 standard and extend them based on client requirements.